I love ChatGPT. I use Claude every single day. These tools have changed how I think, write, and build. But I would never use them to analyze a real estate deal. And if you are a realtor or investor who does, we need to talk.

The Uncomfortable Truth About Your Favorite AI

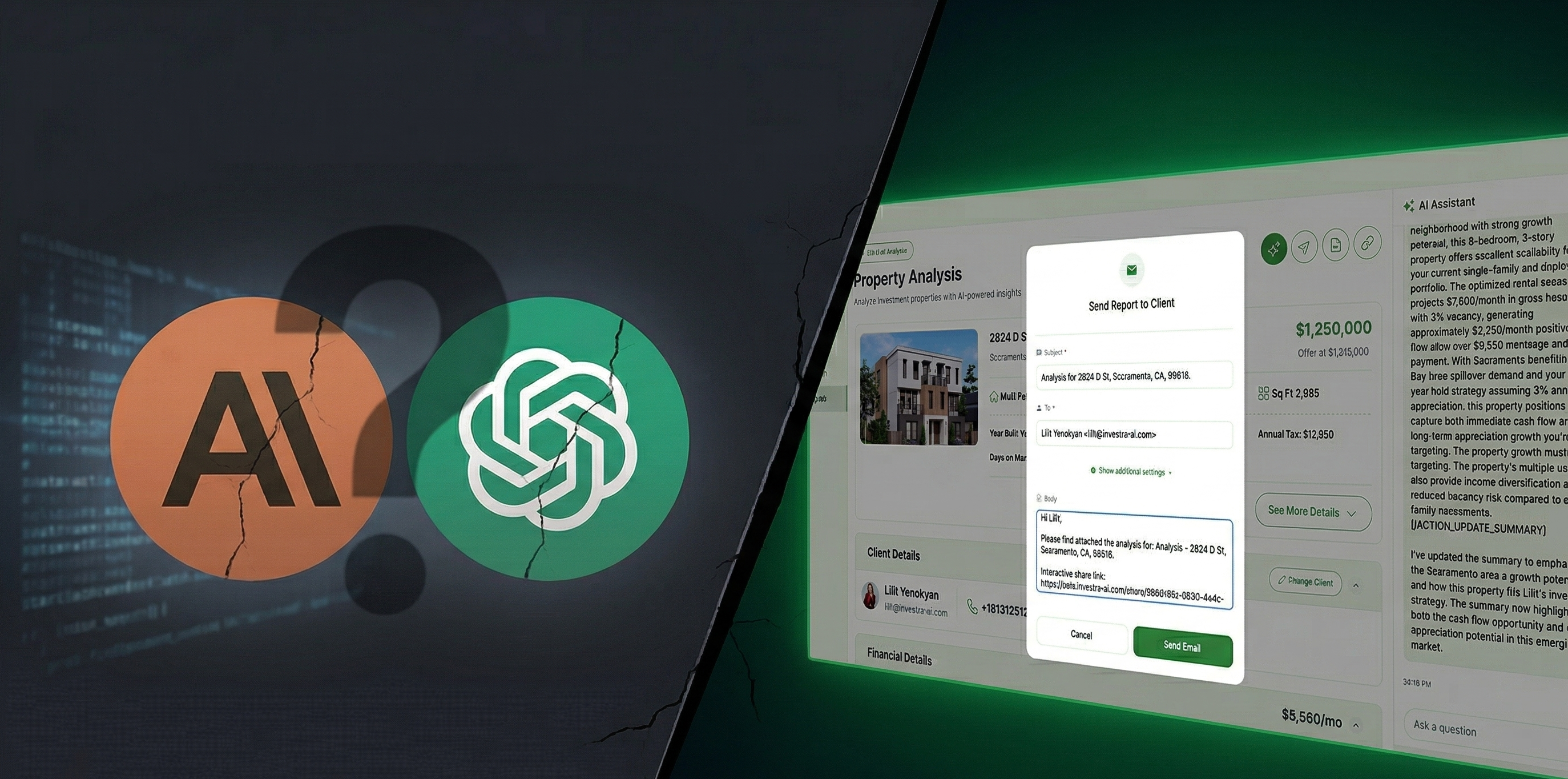

Here is something the AI hype cycle does not want you to think about: ChatGPT and Claude are not real estate tools. They are language models. They are extraordinary at synthesizing information, drafting content, and reasoning through problems. But when you paste a listing URL into ChatGPT and ask whether a property is a good investment, you are not getting analysis. You are getting a confident guess formatted to look like one.

These models do not have access to live MLS data. They do not pull real-time rental comps, local tax rates, current insurance estimates, or today's mortgage pricing. They are reasoning from general training data, not from the specific, current, location-level information that determines whether a deal actually pencils out. A cap rate that is off by half a point can be the difference between a cash-flowing asset and a property that bleeds money from month one. That is not a rounding error. That is a bad investment.
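To make the half-point claim concrete, here is a minimal arithmetic sketch. Every number in it is a hypothetical assumption chosen for illustration (a $400,000 purchase price, a 75% loan-to-value mortgage, a 7% fixed rate over 30 years), not data from a real listing. It uses the standard definitions: cap rate is net operating income divided by price, and debt service comes from the fixed-rate amortization formula.

```python
def monthly_payment(principal: float, annual_rate: float, years: int = 30) -> float:
    """Standard fixed-rate mortgage payment (principal and interest only)."""
    r = annual_rate / 12          # monthly interest rate
    n = years * 12                # number of monthly payments
    if r == 0:
        return principal / n
    return principal * r / (1 - (1 + r) ** -n)


def annual_cash_flow(price: float, cap_rate: float, ltv: float,
                     annual_rate: float, years: int = 30) -> float:
    """Net operating income implied by the cap rate, minus annual debt service."""
    noi = price * cap_rate                                   # cap rate = NOI / price
    debt_service = 12 * monthly_payment(price * ltv, annual_rate, years)
    return noi - debt_service


# Hypothetical deal: same property, two cap rate estimates half a point apart.
PRICE = 400_000
for cap in (0.060, 0.055):
    cf = annual_cash_flow(PRICE, cap, ltv=0.75, annual_rate=0.07)
    print(f"cap rate {cap:.1%}: annual cash flow {cf:,.0f}")
```

Under these assumptions, the 6.0% estimate leaves the property barely positive after debt service, while the 5.5% estimate puts it roughly $2,000 a year in the red. Same property, same loan, and a half-point error flips the sign.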

You Are Giving Away Your Client's Data. For Free.

This is the part that should alarm every realtor reading this. When you paste your client's financial details, investment goals, or property specifics into ChatGPT or Claude, where does that data go?

A 2025 Stanford study found that six leading U.S. AI developers all use chat conversations for model training by default, and some retain that data indefinitely. Stanford's AI Index Report also recorded a 56% year-over-year jump in reported AI incidents. These are not abstract risks. If you cannot explain to your client exactly where their financial information goes when you paste it into a chatbot, you should not be pasting it.

The National Association of Realtors has published explicit guidance warning agents about AI data privacy traps. JLL warns that professionals should avoid feeding personal or confidential information into public AI platforms entirely. This is not theoretical. It is the industry telling you to stop.

General-Purpose AI Does Not Know Your Investor

Here is the deeper problem. ChatGPT does not know that your client is targeting 8% cash-on-cash returns in secondary markets with a five-year hold horizon. Claude does not know that the investor you are working with already owns three properties in Phoenix and needs geographic diversification. These models have no memory of the realtor's market expertise or the investor's specific financial position and goals.

They give you the same generic response whether you are a first-time investor buying a duplex or a fund manager evaluating a 50-unit portfolio. That is not intelligence. That is a search engine with better grammar.

What "Specialized" Actually Means

Investra is not ChatGPT with a real estate skin. It is purpose-built infrastructure. It connects directly to listing data, pulls verified rental comparables, incorporates location-specific tax and insurance assumptions, and models cash flow against current financing terms. Every projection is grounded in real, current data, not general knowledge.

More importantly, Investra knows context. It understands the investor's goals, the realtor's market, and the specific property being evaluated. It stress tests performance across rate scenarios and occupancy assumptions. It accounts for vacancy, property management, capital reserves, and debt service. When you run a deal through Investra, you are not getting an opinion. You are getting a financial model built on data you can actually defend to a client.
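For readers who want to see what a stress test across rate and occupancy scenarios looks like in practice, here is a simplified sketch. It is not Investra's actual model; the `Deal` fields, the default percentages, and the sample inputs are all hypothetical assumptions, and it ignores closing costs, appreciation, and taxes on income. It computes cash-on-cash return (annual pre-tax cash flow divided by cash invested) for each combination of interest rate and occupancy.

```python
from dataclasses import dataclass


@dataclass
class Deal:
    # All defaults below are illustrative assumptions, not market data.
    price: float
    monthly_rent: float
    down_payment_pct: float = 0.25
    annual_taxes_insurance: float = 6_000.0
    management_pct: float = 0.08    # share of collected rent
    reserves_pct: float = 0.05      # capital reserves, share of collected rent
    loan_years: int = 30


def annual_debt_service(principal: float, annual_rate: float, years: int) -> float:
    """Twelve months of fixed-rate principal-and-interest payments."""
    r, n = annual_rate / 12, years * 12
    if r == 0:
        return principal / years
    return 12 * principal * r / (1 - (1 + r) ** -n)


def cash_on_cash(deal: Deal, annual_rate: float, occupancy: float) -> float:
    """Annual pre-tax cash flow divided by the cash invested (down payment only)."""
    collected = deal.monthly_rent * 12 * occupancy           # rent after vacancy
    expenses = (deal.annual_taxes_insurance
                + collected * (deal.management_pct + deal.reserves_pct))
    cash_invested = deal.price * deal.down_payment_pct
    loan = deal.price - cash_invested
    cash_flow = (collected - expenses
                 - annual_debt_service(loan, annual_rate, deal.loan_years))
    return cash_flow / cash_invested


def stress_test(deal: Deal, rates: list[float], occupancies: list[float]) -> dict:
    """Cash-on-cash return for every (rate, occupancy) scenario."""
    return {(r, o): cash_on_cash(deal, r, o) for r in rates for o in occupancies}


# Hypothetical deal evaluated across nine scenarios.
deal = Deal(price=400_000, monthly_rent=3_200)
for (rate, occ), coc in stress_test(deal, [0.06, 0.07, 0.08], [0.90, 0.95, 1.00]).items():
    print(f"rate {rate:.0%}, occupancy {occ:.0%}: cash-on-cash {coc:.1%}")
```

The point of the grid is that a single headline return hides how quickly the result moves when the rate rises a point or the unit sits empty for a month. The inputs are only as good as their source, which is why the rent, tax, and insurance figures need to come from current local data rather than a model's general knowledge.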

And your client's data stays where it belongs: inside a platform built with privacy and data protection as foundational commitments, not afterthoughts bolted onto a consumer chatbot.

The Numbers Do Not Lie

Despite over 80% of real estate professionals now using AI in some form, only 5% of firms have achieved their AI program goals. That gap is not because AI does not work. It is because most people are using the wrong AI for the job. They are trying to force general-purpose tools into specialized workflows and wondering why the results are mediocre.

Meanwhile, regulation is catching up. Colorado's AI Act will require impact assessments for AI used in housing decisions, with penalties up to $20,000 per violation per consumer. The era of casually pasting client data into chatbots and calling it analysis is ending, legally and professionally.

Use AI. Just Use the Right AI.

I am not anti-AI. I run an AI company. I use Claude to brainstorm, draft, research, and think. But when real money is on the line, when a client is trusting me or trusting their realtor with a six- or seven-figure decision, the tools need to match the stakes.

Use ChatGPT to research markets. Use Claude to draft your LOI. Use them for what they are genuinely great at. But do not confuse a fluent answer with an accurate one. And do not sacrifice your client's privacy for the convenience of a chat window.

The investors and realtors who will win over the next decade are not the ones using the most AI. They are the ones using the right tools for the right jobs.

Ready to analyze deals with data your clients can trust? 👉 Get Started with Investra